Legacy On-Prem Application Scenario

We recently “cloudified” a legacy application for one of our large customers. The application is quite old, is a Windows application written in C++, and performs a lot of complex calculations. Most of the calculations are short-running, but some are long-running. Very long-running, to be honest: some take up to several days. Our mission was to bring this C++ application to the web. To do so, the calculations were wrapped in an ASP.NET Core application, as described by my colleague Pawel Gerr. An Angular-based SPA was built to replace the old Windows UI, and some additional “helper” services were created to support the SPA (e.g., a user configuration API and authentication via OpenID Connect).

After all parts were built, the customer wanted to run the application in Azure.

Of course, there were some non-functional requirements that we had to follow:

- As few systems as possible should be directly accessible.

- SSL must be used for everything.

- The system should scale flexibly.

- The budget was limited (of course!).

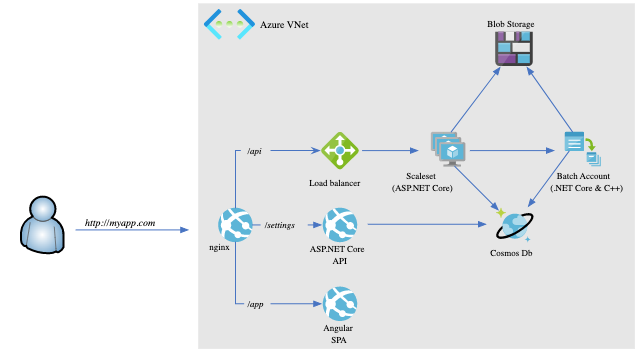

After some hands-on research, we came up with the following architecture for a proof-of-concept (PoC) implementation.

Moving to Azure with a Cloud Native Architecture

Since the customer wants to run the application on Kubernetes in the long term, the SPA and its “helper” services were containerized using Docker. For now, we chose Azure App Service on Linux to run the containers.

Because the C++ application currently does not scale well horizontally, we chose to host it on a Virtual Machine Scale Set (VMSS) for the short-running calculations and on Azure Batch for the long-running calculations. Azure Kubernetes Service (AKS) is not yet a good fit for the C++ application: Windows containers in AKS need more reserved resources, and the application currently scales vertically, simply by running on more powerful VMs.

The PoC architecture can be depicted like this:

Separating VMSS and Azure Batch lets the customer scale each part independently and use different VM sizes for different loads. Following this decision, the application's API was split: the ASP.NET Core part runs on VMSS and starts the short-running calculations. It also decides when a calculation has to run as a long-running one and starts it via the backend API on Azure Batch. Azure Blob Storage holds the deployment artifacts of the backend API.
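The routing decision described above can be sketched as follows. This is a minimal, hypothetical illustration rather than the project's code: the article does not say how the API decides that a calculation is long-running, so the sketch assumes an estimated duration and a configurable threshold. `ExecutionTarget`, `CalculationRouting`, and the two start delegates are names made up for this example.

```csharp
using System;
using System.Threading.Tasks;

// Where a calculation is executed (hypothetical names).
public enum ExecutionTarget
{
    Vmss,       // short-running: computed directly by the ASP.NET Core API on the scale set
    AzureBatch  // long-running: handed over to the backend API running on Azure Batch
}

public static class CalculationRouting
{
    // Assumed rule: anything estimated to take at least `threshold` counts as long-running.
    public static ExecutionTarget ChooseTarget(TimeSpan estimatedDuration, TimeSpan threshold) =>
        estimatedDuration >= threshold ? ExecutionTarget.AzureBatch : ExecutionTarget.Vmss;

    // Calls exactly one of the two start functions and returns whatever id it produces.
    // runOnVmss and submitToBatch stand in for the real (unpublished) start calls.
    public static Task<string> StartAsync(
        TimeSpan estimatedDuration,
        TimeSpan threshold,
        Func<Task<string>> runOnVmss,
        Func<Task<string>> submitToBatch) =>
        ChooseTarget(estimatedDuration, threshold) == ExecutionTarget.AzureBatch
            ? submitToBatch()
            : runOnVmss();
}
```

With a one-hour threshold, a calculation estimated at two days would be passed to `submitToBatch`, while a five-minute one would stay on the scale set. Passing the start functions as delegates keeps the decision itself a pure function that is easy to unit-test.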

For data storage, we decided to use Azure Cosmos DB because the data is mostly unstructured.

In front of the entire system, we put an NGINX reverse proxy that also runs in a container on Azure App Service on Linux. We first tried Azure Application Gateway, but switched to NGINX to reduce costs and simplify the SSL setup. Azure App Service supports App Service managed certificates: Azure issues and renews free SSL certificates automatically. Unfortunately, these certificates are not (yet?) supported on Azure Application Gateway.

To secure and limit access to the system, we put everything into an Azure Virtual Network (VNet). The VNet isolates all services, so they can only be reached from within the VNet. NGINX acts as a bridge that allows access from the public Internet to specific endpoints within the VNet.

The following sections describe some lessons we learned while building the PoC.

Azure Batch in VNet

Azure Batch is a Platform-as-a-Service (PaaS) product that uses a VMSS to distribute and execute jobs. While it is possible to move the underlying VMSS into a VNet, it is NOT possible to move the Azure Batch service itself into the VNet. This means the Azure Batch service is always reachable from the Internet. Since it does not allow anonymous access, this is not a big issue. For the moment, it was acceptable for the customer, so we stuck with it.

IaC and Azure Preview Features

We always advise our customers to use infrastructure as code (IaC) to manage and deploy their cloud environments; in this case, we used Terraform. When the system was built, VNet integration for Azure App Service on Linux was still in preview, and Terraform did not support it yet. Fortunately, Terraform allows creating custom modules that can use the local-exec provisioner to run command-line tools. We created a custom module for the VNet integration that uses the Azure CLI to connect the Azure App Service to a VNet. Here are the relevant parts of it:

```hcl
/*
  Module to configure VNet integration on app services.

  Currently, this needs to be done via the Azure CLI, since it is not yet supported by
  the Terraform provider. Make sure to ignore changes to the `virtual_network_name`
  property of the `site_config` for the given app service. The property is changed by
  the Azure CLI and is therefore not tracked by Terraform, which causes Terraform to
  delete the property (effectively removing the VNet integration) on reruns/reapplies.
*/

variable "resource_group_name" {
  type = string
}

variable "app_service_id" {
  type = string
}

variable "app_service_name" {
  type = string
}

variable "virtual_network_name" {
  type = string
}

variable "virtual_network_subnet_name" {
  type = string
}

# Replaced (so the CLI command runs again) whenever one of the inputs changes.
resource "null_resource" "appservice-vnet" {
  triggers = {
    app_service_id              = var.app_service_id
    resource_group_name         = var.resource_group_name
    app_service_name            = var.app_service_name
    virtual_network_name        = var.virtual_network_name
    virtual_network_subnet_name = var.virtual_network_subnet_name
  }

  # Connect the app service to the subnet via the Azure CLI.
  provisioner "local-exec" {
    command = "az webapp vnet-integration add -g ${var.resource_group_name} -n ${var.app_service_name} --vnet ${var.virtual_network_name} --subnet ${var.virtual_network_subnet_name}"
  }
}
```

The module can then be referenced to integrate an Azure App Service into a VNet:

```hcl
module "app_vnet" {
  source = "./az_appservice_vnet"

  resource_group_name         = azurerm_resource_group.resgrp.name
  app_service_id              = azurerm_app_service.app.id
  app_service_name            = azurerm_app_service.app.name
  virtual_network_name        = azurerm_virtual_network.vnet.name
  virtual_network_subnet_name = azurerm_subnet.appservices.name
}
```

At the time of writing, this custom module is no longer necessary, because the Terraform Azure provider has been updated and now supports VNet integration natively (via the `azurerm_app_service_virtual_network_swift_connection` resource).

VNet Integration of Linux Containers on App Service

VNet integration of Linux containers on Azure App Service is a bit tricky. Azure assigns a random port to the container, and the application inside the container has to listen on that port to be reachable within the VNet. The port is passed to the container via an environment variable. We had to customize the Docker image and the ASP.NET Core APIs to listen on this port. In the Dockerfile, we declared the variable with a default value and exposed the port:

```dockerfile
# Default port; overridden by the PORT value passed to the container at runtime.
ENV PORT=80
EXPOSE $PORT
```

After that, we changed Program.cs of the ASP.NET Core APIs to listen on that port:

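```csharp
using System;
using Microsoft.AspNetCore;
using Microsoft.AspNetCore.Hosting;

public class Program
{
    public static void Main(string[] args)
    {
        var builder = CreateWebHostBuilder(args);

        // Azure passes the port the container must listen on via the PORT variable.
        var port = Environment.GetEnvironmentVariable("PORT");
        if (!string.IsNullOrWhiteSpace(port))
        {
            builder.UseUrls($"http://+:{port}"); // "+" binds to all interfaces
        }

        builder.Build().Run();
    }

    // Default ASP.NET Core 2.x template method; Startup is the app's startup class.
    public static IWebHostBuilder CreateWebHostBuilder(string[] args) =>
        WebHost.CreateDefaultBuilder(args).UseStartup<Startup>();
}
```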
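The snippet above hands the raw PORT value straight to `UseUrls`, so a malformed value would likely only fail once Kestrel parses the address at startup. If you prefer a clear error up front, a small helper can validate the value first. This is a hypothetical variant for illustration; `ListenUrl.FromPortVariable` is a name chosen for this sketch and is not part of ASP.NET Core.

```csharp
#nullable enable
using System;

public static class ListenUrl
{
    // Turns the value of the PORT environment variable into a listen URL.
    // Returns null when the variable is missing or blank, so the host keeps its default URLs.
    public static string? FromPortVariable(string? portValue)
    {
        if (string.IsNullOrWhiteSpace(portValue))
        {
            return null;
        }

        // Reject anything that is not a valid TCP port number.
        if (!int.TryParse(portValue.Trim(), out var port) || port < 1 || port > 65535)
        {
            throw new ArgumentException(
                $"PORT must be a number between 1 and 65535, got '{portValue}'.",
                nameof(portValue));
        }

        // "+" binds to all network interfaces, as in the Program.cs snippet.
        return $"http://+:{port}";
    }
}
```

In `Main`, it would replace the null check: `var url = ListenUrl.FromPortVariable(Environment.GetEnvironmentVariable("PORT")); if (url != null) builder.UseUrls(url);`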

Conclusion

By slicing the application cleverly, adding wrappers, and writing new helper code, legacy applications, even those written in C++, can be made cloud-ready quite easily. Azure provides many services that help with migrations, so a total rewrite is not always needed. Infrastructure-as-a-Service (IaaS) products such as Virtual Machine Scale Sets are especially helpful when migrating existing applications to the cloud.