Using dynamically created fields or even entities in raw SQL shouldn’t be a problem, but it gets more challenging when working with Entity Framework Core (EF). Two significant issues come into mind: (1) How to tell EF that the model has changed, and (2) How to use the new fields and entities with LINQ.

Source Code: GitHub

Please note: The code in this article is not production-ready but is a proof of concept.

Metamodel for additional fields and entities

The additional fields and entities require a metamodel describing them. This article will concentrate on some basic properties of a field and entity, like the name or the data type. In an actual application, the metamodel itself will most likely be stored in a database. In this article, the metamodel stays in memory to focus on more exciting parts.

public class Metamodel

{

public int Version { get; set; }

public List<AdditionalField> Fields { get; } = new();

public List<AdditionalEntity> Entities { get; } = new();

}

public class AdditionalField

{

public string EntityName { get; set; }

public string PropertyName { get; set; }

public Type PropertyType { get; set; }

public bool IsRequired { get; set; }

public int? MaxLength { get; set; }

}

public class AdditionalEntity

{

public string EntityName { get; set; }

public string TableName { get; set; }

public string? TableSchema { get; set; }

public List<AdditionalField> Key { get; } = new();

public List<AdditionalField> Fields { get; } = new();

}

The property Version of the Metamodel is a simple means for a determination whether the model has changed or not. Alternatively, the version is computed by creating a hash over all entities and fields.

Initial database model

The initial model has one entity Product, which has an Id and a Name. The entity and its properties are a fixed part of our database model.

public class Product

{

public Guid Id { get; }

public string Name { get; set; }

public Product(Guid id, string name)

{

Id = id;

Name = name;

}

}

public class DemoDbContext : DbContext

{

public DbSet<Product> Products { get; set; }

public DemoDbContext(DbContextOptions<DemoDbContext> options)

: base(options)

{

}

protected override void OnModelCreating(ModelBuilder modelBuilder)

{

modelBuilder.Entity<Product>(builder =>

{

builder.HasKey(p => p.Id);

builder.Property(p => p.Name).HasMaxLength(100);

});

}

}

A check that the model is valid and fully functional.

var connString = "...";

var serviceProvider = new ServiceCollection()

.AddDbContext<DemoDbContext>(builder => builder.UseSqlServer(connString))

.BuildServiceProvider();

await ReCreateDatabaseAndFetchProductsAsync(serviceProvider);

static async Task ReCreateDatabaseAndFetchProductsAsync(ServiceProvider provider)

{

await using var scope = provider.CreateAsyncScope();

var dbContext = scope.ServiceProvider.GetRequiredService<DemoDbContext>();

await dbContext.Database.EnsureDeletedAsync();

await dbContext.Database.EnsureCreatedAsync();

var id = new Guid("3CB4A79E-17DF-4F3F-8A5F-62561153E789");

dbContext.Products.Add(new Product(id, "Product"));

await dbContext.SaveChangesAsync();

var products = await dbContext.Products.ToListAsync();

Console.WriteLine(JsonSerializer.Serialize(products));

}

[{"Id":"3cb4a79e-17df-4f3f-8a5f-62561153e789","Name":"Product"}] Reconfiguration of the DbContext

The DbContext gets a reference to the metamodel from the dependency injection to be able to apply changes. The metamodel is a singleton for the sake of simplicity.

var serviceProvider = new ServiceCollection()

.AddSingleton<Metamodel>()

ApplyChanges.

public class DemoDbContext : DbContext

{

public Metamodel Metamodel { get; }

public DbSet<Product> Products { get; set; }

public DemoDbContext(

DbContextOptions<DemoDbContext> options,

Metamodel metamodel)

: base(options)

{

Metamodel = metamodel;

}

protected override void OnModelCreating(ModelBuilder modelBuilder)

{

modelBuilder.Entity<Product>(builder =>

{

builder.HasKey(p => p.Id);

builder.Property(p => p.Name).HasMaxLength(100);

});

Metamodel.ApplyChanges(modelBuilder);

}

}

ApplyChanges iterates over the entities and fields and adds them to the provided ModelBuilder.

public static class MetamodelExtensions

{

public static void ApplyChanges(

this Metamodel metamodel,

ModelBuilder modelBuilder)

{

foreach (var entity in metamodel.Entities)

{

modelBuilder.AddEntity(entity);

}

foreach (var fieldGroup in metamodel.Fields.GroupBy(f => f.EntityName))

{

modelBuilder.Entity(fieldGroup.Key,

builder =>

{

foreach (var field in fieldGroup)

{

builder.AddField(field);

}

});

}

}

private static void AddEntity(this ModelBuilder modelBuilder, AdditionalEntity entity)

{

modelBuilder.Entity(entity.EntityName,

builder =>

{

builder.ToTable(entity.TableName, entity.TableSchema);

foreach (var field in entity.Fields)

{

builder.AddField(field);

}

if (entity.Key.Count == 0)

{

builder.HasNoKey();

}

else

{

builder.HasKey(entity.Key.Select(f => f.PropertyName).ToArray());

}

});

}

private static void AddField(this EntityTypeBuilder builder, AdditionalField field)

{

var propertyBuilder = builder.Property(field.PropertyType, field.PropertyName)

.IsRequired(field.IsRequired);

if (field.MaxLength.HasValue)

propertyBuilder.HasMaxLength(field.MaxLength.Value);

}

}

Changing the model with the current application state won’t affect EF because OnModelCreating is executed just once. EF needs a hint to create a new model when the metamodel changes. A custom implementation of IModelCacheKeyFactory provides this hint.

The DbContext gets a new interface IMetamodelAccessor, for easier access to the metamodel.

public interface IMetamodelAccessor

{

Metamodel Metamodel { get; }

}

public class DemoDbContext : DbContext, IMetamodelAccessor

{

public Metamodel Metamodel { get; }

...

The difference in the custom implementation is the consideration of the metamodel version.

public class MetamodelAwareCacheKeyFactory : IModelCacheKeyFactory

{

public object Create(DbContext context, bool designTime)

{

return context is IMetamodelAccessor metamodelAccessor

? new MetamodelCacheKey(context, designTime, metamodelAccessor.Metamodel.Version)

: new ModelCacheKey(context, designTime);

}

}

public sealed class MetamodelCacheKey : ModelCacheKey

{

private readonly int _metamodelVersion;

public MetamodelCacheKey(DbContext context, bool designTime, int metamodelVersion)

: base(context, designTime)

{

_metamodelVersion = metamodelVersion;

}

protected override bool Equals(ModelCacheKey other)

{

return other is MetamodelCacheKey otherCacheKey

&& base.Equals(otherCacheKey)

&& otherCacheKey._metamodelVersion == _metamodelVersion;

}

public override int GetHashCode()

{

return HashCode.Combine(base.GetHashCode(), _metamodelVersion);

}

}

MetamodelAwareCacheKeyFactory is registered in the Program.cs via ReplaceService.

var serviceProvider = new ServiceCollection()

.AddSingleton<Metamodel>()

.AddDbContext<DemoDbContext>(builder => builder

.UseSqlServer(connString)

.ReplaceService<IModelCacheKeyFactory, MetamodelAwareCacheKeyFactory>())

Another run of the application proofs that the model is still intact.

Accessing dynamically created entities and fields

New properties and entities cannot be accessed the usual way because they don’t exist at development time. For example, the model is changed by adding a new entity ProductType and a new Property Description to the existing entity Product. When the model changes, then it is important to increment the version of the metamodel. The database is updated via hard-coded SQL statements for the sake of simplicity.

Load balancing: Make sure to inform the other servers when the metamodel changes.

await ReCreateDatabaseAndFetchProductsAsync(serviceProvider);

await ChangeModelAsync(serviceProvider);

static async Task ChangeModelAsync(ServiceProvider provider)

{

await using var scope = provider.CreateAsyncScope();

var dbContext = scope.ServiceProvider.GetRequiredService<DemoDbContext>();

var metamodel = scope.ServiceProvider.GetRequiredService<Metamodel>();

metamodel.Version++;

// Add a new field to existing entity

metamodel.Fields.Add(new AdditionalField

{

EntityName = "UserDefinedFieldsAndTables.Database.Product",

PropertyName = "Description",

PropertyType = typeof(string),

MaxLength = 200

});

// Add completely new entity

var productTypeKey = new AdditionalField

{

PropertyName = "Id",

PropertyType = typeof(Guid),

IsRequired = true

};

metamodel.Entities.Add(new AdditionalEntity

{

EntityName = "ProductType",

TableName = "ProductTypes",

Key = { productTypeKey },

Fields =

{

productTypeKey,

new AdditionalField

{

PropertyName = "Name",

PropertyType = typeof(string),

MaxLength = 100

}

}

});

dbContext.Database.ExecuteSqlRaw(@"

ALTER TABLE Products ADD Description NVARCHAR(200);

CREATE TABLE ProductTypes

(

Id UNIQUEIDENTIFIER PRIMARY KEY,

Name NVARCHAR(100) NOT NULL

);

");

dbContext.Database.ExecuteSqlRaw(@"

UPDATE Products

SET Description = 'Product description';

INSERT INTO ProductTypes (Id, Name)

VALUES ('5B3F23F9-9D97-42A2-99F2-1D19710E6690', 'ProductType');

");

}

The filtering, sorting and selection of newly created property Description requires using the method EF.Property. This method may look cumbersome at first, but it doesn’t matter much because no developer will write this code manually. Since the property is created dynamically, the queries also are created dynamically.

If the product is loaded as is, i.e. without Select, then the description will be loaded as a shadow property.

await ReCreateDatabaseAndFetchProductsAsync(serviceProvider);

await ChangeModelAsync(serviceProvider);

await AccessDescriptionAsync(serviceProvider);

static async Task AccessDescriptionAsync(ServiceProvider provider)

{

await using var scope = provider.CreateAsyncScope();

var dbContext = scope.ServiceProvider.GetRequiredService<DemoDbContext>();

var descriptions = await dbContext.Products

.Where(p => EF.Property<string?>(p, "Description") != null)

.OrderBy(p => EF.Property<string?>(p, "Description"))

.Select(p => EF.Property<string?>(p, "Description"))

.ToListAsync();

// output: ["Product description"]

Console.WriteLine(JsonSerializer.Serialize(descriptions));

var product = await dbContext.Products.SingleAsync();

// output: {"Id":"3cb4a79e-17df-4f3f-8a5f-62561153e789","Name":"Product"}

Console.WriteLine(JsonSerializer.Serialize(product));

var description = dbContext.Entry(product).Property<string>("Description").CurrentValue;

// output: Product description

Console.WriteLine(description);

}

The generated SQL statements for the description don’t differ from non-dynamic properties.

-- 1st query

SELECT [p].[Description]

FROM [Products] AS [p]

WHERE [p].[Description] IS NOT NULL

ORDER BY [p].[Description]

-- 2nd query

SELECT TOP(2) [p].[Id], [p].[Description], [p].[Name]

FROM [Products] AS [p]

The handling of dynamically added entities is very similar. These so-called property-bag-entity-types are materialized into a Dictionary<string, object> because there are no implementations for them. The string ProductType, provided to the method Set, is the entity name specified in the metamodel.

await ReCreateDatabaseAndFetchProductsAsync(serviceProvider);

await ChangeModelAsync(serviceProvider);

await AccessDescriptionAsync(serviceProvider);

await AccessProductTypeAsync(serviceProvider);

static async Task AccessProductTypeAsync(ServiceProvider provider)

{

await using var scope = provider.CreateAsyncScope();

var dbContext = scope.ServiceProvider.GetRequiredService<DemoDbContext>();

var names = await dbContext.Set<Dictionary<string, object>>("ProductType")

.Where(p => EF.Property<string?>(p, "Name") != String.Empty)

.OrderBy(p => EF.Property<string?>(p, "Name"))

.Select(p => EF.Property<string?>(p, "Name"))

.ToListAsync();

// output: ["ProductType"]

Console.WriteLine(JsonSerializer.Serialize(names));

var productTypes = await dbContext.Set<Dictionary<string, object>>("ProductType")

.ToListAsync();

// [{"Id":"5b3f23f9-9d97-42a2-99f2-1d19710e6690","Name":"ProductType"}]

Console.WriteLine(JsonSerializer.Serialize(productTypes));

}

Cleanup of the cache

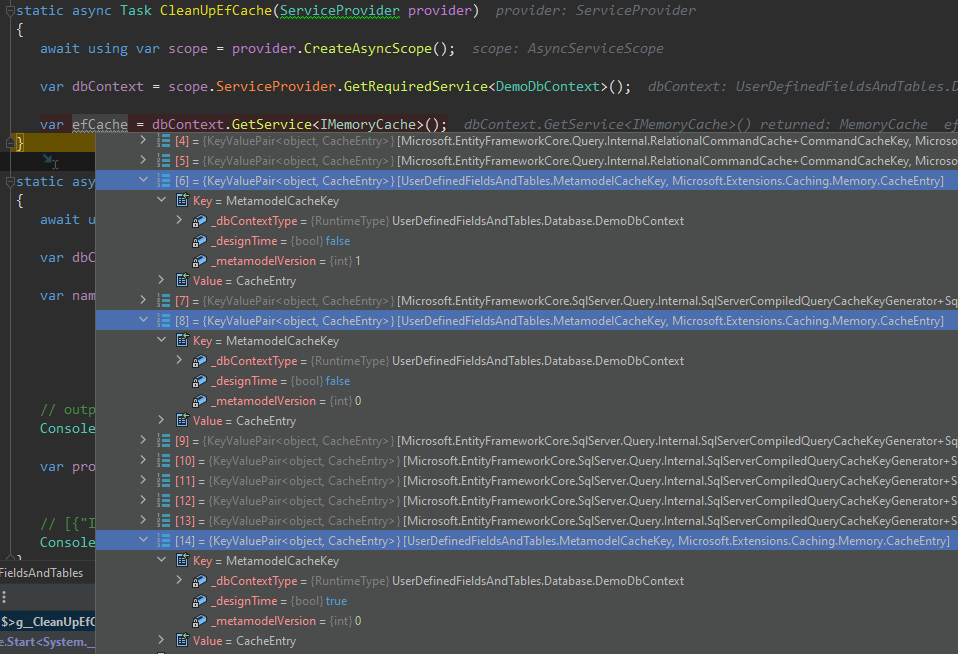

With every change of the metamodel comes a new EF model into the cache. Depending on the frequency of the changes, sooner or later, the EF cache gets too big. The screenshot below shows the state of the cache after changing the metamodel once. We have 3 MetamodelCacheKeys, 1 with designTime=true and 2 with false. The latter have different metamodelVersion, i.e. one of the EF models is the old one, the other the new one. If no running requests using the old model anymore, then this model is removed from the cache to prevent a waste of memory.

One approach is to fetch the current cache key before changing the metamodel to remove it later from the cache. The model is probably the largest object in the cache; still, there can be other items that aren’t necessary anymore. Alas, we don’t know which ones can be deleted safely. One approach could be to use the method MemoryCache.Compact(percentage) to remove the oldest cache keys if the cache gets too big.

await ReCreateDatabaseAndFetchProductsAsync(serviceProvider);

var oldCacheKey = await ChangeModelAsync(serviceProvider);

await AccessDescriptionAsync(serviceProvider);

await AccessProductTypeAsync(serviceProvider);

// X hours later...

await CleanUpEfCache(serviceProvider, oldCacheKey);

static async Task CleanUpEfCache(ServiceProvider provider, object oldCacheKey)

{

await using var scope = provider.CreateAsyncScope();

var dbContext = scope.ServiceProvider.GetRequiredService<DemoDbContext>();

var efCache = dbContext.GetService<IMemoryCache>();

efCache.Remove(oldCacheKey);

}

static async Task<object> ChangeModelAsync(ServiceProvider provider)

{

await using var scope = provider.CreateAsyncScope();

var dbContext = scope.ServiceProvider.GetRequiredService<DemoDbContext>();

var metamodel = scope.ServiceProvider.GetRequiredService<Metamodel>();

var cacheKey = dbContext.GetService<IModelCacheKeyFactory>().Create(dbContext, false);

...

return cacheKey;

}

Dynamic code compilation

Suppose, for some reason, the usage of EF.Property or property-bag-entity-types is not possible. In that case, there is probably no other choice than to generate and compile new classes at runtime. With some architectural changes, the metamodel can add new or replace existing entities compiled by the Roslyn compiler (CSharpCompilation) or emitted by ILGenerator.

Summary

From time to time, the customers ask for the best approach to store additional fields for an existing entity in a relational database. Although there are some, they are limited and lead to worse performance or increased resource consumption. Luckily, most of the projects are not noticeably affected by the drawbacks, and for the others, we may have a solution.