
Canvas: A Bitmap for the Web

First, you need an element to draw on. Fortunately, the web provides the canvas element for this purpose. It was introduced with HTML5 and has shipped in all major browsers for over a decade. It provides a two-dimensional bitmap that you can draw on using a low-level JavaScript API.

<canvas class="main" width="300" height="300" style="image-rendering: pixelated"></canvas>

A canvas has a certain width and height, specified in logical pixels via HTML attributes. Using CSS, you can separately specify the width and height of the canvas element in CSS pixels. By combining the two, you can support high-definition (“retina”) displays that use more than one physical pixel to show a single CSS pixel. When Paint was created, high-DPI screens weren’t a thing, so the clone uses only logical pixels. Setting the CSS property image-rendering to pixelated makes drawings appear pixelated rather than blurry on high-DPI screens.
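To make the interplay between the HTML attributes and the CSS size concrete, here is a minimal sketch of how a canvas could be sized for a high-DPI display. The Paint clone deliberately does not do this. The helper name sizeCanvasForDisplay and the CanvasLike interface are assumptions made for illustration; in a browser you would pass a real canvas element together with window.devicePixelRatio.

```typescript
// Hypothetical structural type: the subset of HTMLCanvasElement this sketch needs.
interface CanvasLike {
  width: number;   // backing bitmap width in logical pixels (HTML attribute)
  height: number;  // backing bitmap height in logical pixels (HTML attribute)
  style: { width: string; height: string }; // displayed size in CSS pixels
}

// Hypothetical helper: enlarges the bitmap by the device pixel ratio
// while keeping the displayed size fixed in CSS pixels.
function sizeCanvasForDisplay(
  canvas: CanvasLike,
  cssWidth: number,
  cssHeight: number,
  devicePixelRatio: number,
): void {
  canvas.width = Math.round(cssWidth * devicePixelRatio);
  canvas.height = Math.round(cssHeight * devicePixelRatio);
  canvas.style.width = `${cssWidth}px`;
  canvas.style.height = `${cssHeight}px`;
}

// Browser usage (assumed setup, not taken from the Paint clone):
//   sizeCanvasForDisplay(canvas, 300, 300, window.devicePixelRatio);
```

With a device pixel ratio of 2, a canvas displayed at 300 × 300 CSS pixels would be backed by a 600 × 600 bitmap. Calling context.scale(ratio, ratio) afterwards would let the drawing code keep working in CSS pixels.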

Contexts: Choose the Right Tool for the Right Job

To draw on the canvas, you can make use of different rendering contexts. They provide a set of tools to set the pixels of the canvas. The WebGL 2.0 and WebGL contexts can be used to render 3D visualizations to the canvas, while the 2D context provides 2D operations such as drawing a rectangle or a line. To obtain a context, you call the getContext() method and pass the context identifier to it:

const canvas = this.shadowRoot.querySelector('canvas.main') as HTMLCanvasElement;

const context = canvas.getContext('2d');

Our Paint sample makes use of the 2D drawing context. As most of Paint’s tools don’t support anti-aliasing, the clone will primarily use the fillRect() method to fill entire pixels. The following code is called in order to clear all pixels on the canvas:

context.fillStyle = colors.secondary;

context.fillRect(0, 0, canvas.width, canvas.height);

Moreover, the clone makes use of Jack Bresenham’s algorithms to paint rasterized lines and ellipses.
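For readers who have not seen it before, the following is a minimal sketch of Bresenham’s line algorithm in its common integer, all-directions form. It is not the clone’s actual source: the signature is an assumption chosen to match how line() is called later in this article, and rounding the inputs is an extra safeguard added here so that the loop always terminates.

```typescript
type Plot = (x: number, y: number) => void;

// Sketch of Bresenham's line algorithm: calls plot() once for every
// pixel on the rasterized line from (x0, y0) to (x1, y1), inclusive.
function line(x0: number, y0: number, x1: number, y1: number, plot: Plot): void {
  // Assumption: snap to whole pixels so the end condition can be reached.
  x0 = Math.round(x0);
  y0 = Math.round(y0);
  x1 = Math.round(x1);
  y1 = Math.round(y1);

  const dx = Math.abs(x1 - x0);
  const dy = -Math.abs(y1 - y0);
  const stepX = x0 < x1 ? 1 : -1;
  const stepY = y0 < y1 ? 1 : -1;
  let error = dx + dy; // combined error term for both axes

  while (true) {
    plot(x0, y0);
    if (x0 === x1 && y0 === y1) break;
    const doubled = 2 * error;
    if (doubled >= dy) { // step along the x axis
      error += dy;
      x0 += stepX;
    }
    if (doubled <= dx) { // step along the y axis
      error += dx;
      y0 += stepY;
    }
  }
}
```

Because the algorithm only uses integer additions and comparisons, every plotted point is a whole pixel, which fits the fillRect(x, y, 1, 1) approach described above.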

Speed up Drawing with a Desynchronized Canvas

For additional drawing performance, I activated low-latency rendering. By default, the canvas synchronizes with the DOM on graphics updates. This can add latency that interferes with hand-eye coordination and makes drawing more difficult.

By enabling low-latency rendering, DOM synchronization is skipped, which leads to a more natural experience when drawing. At the time of this writing, low-latency rendering is only supported in Google Chrome for ChromeOS and Windows. Opting in is easy: when retrieving the context, you can pass a configuration object as the second argument. To enable low-latency rendering, set the desynchronized hint to true.

const context = canvas.getContext('2d', { desynchronized: true });

If the browser or platform does not support low-latency rendering, the hint will simply be ignored. Although low-latency rendering is not available on Android and iOS, I didn’t notice any performance penalties there.
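If you want to know whether the hint was actually honored, one possible approach is to read the attributes back from the context. The sketch below assumes that getContextAttributes() is available on the 2D context and reports desynchronized as true only when low-latency rendering is active. The helper name isLowLatency and the ContextWithAttributes interface are hypothetical.

```typescript
// Hypothetical structural type: the subset of the 2D context this sketch needs.
// The method is optional because not every browser is assumed to provide it.
interface ContextWithAttributes {
  getContextAttributes?: () => { desynchronized?: boolean };
}

// Returns true only if the context explicitly reports a desynchronized canvas.
function isLowLatency(context: ContextWithAttributes): boolean {
  return context.getContextAttributes?.().desynchronized === true;
}

// Browser usage (assumed setup):
//   const context = canvas.getContext('2d', { desynchronized: true });
//   if (context && isLowLatency(context)) {
//     console.log('Low-latency rendering is active.');
//   }
```

Since an unsupported hint is ignored, this check is purely informational; the drawing code stays the same either way.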

Pointer Events: Pen, Finger, and Mouse under One Roof

With the introduction of Windows 8, Microsoft implemented the so-called Pointer Events in Internet Explorer 10. Pointer Events provide an abstract interface for recognizing user input from various pointing devices, such as a mouse, pen, or touchscreen. Before Pointer Events, developers had to register for events from different input devices separately. Pointer Events have been supported by all major browsers for some time. Because the Paint clone should be truly cross-platform, it needs to support all of these pointing devices.

The clone registers for Pointer Events to recognize input from all pointing devices at once: The pointerdown event is raised when a finger or pen is placed on the screen, or a mouse button is pressed. The pointermove event is raised when the finger, pen, or mouse is moved across the screen. Finally, the pointerup event is raised when the user lifts their finger or pen again, or releases the pressed mouse button.

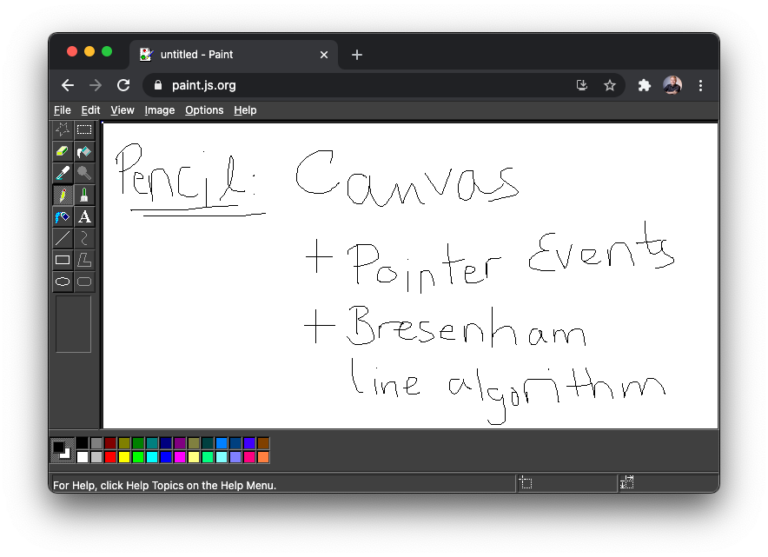
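Pointer Events report positions relative to the viewport, so they have to be translated into canvas pixels before a tool can use them. The following is a minimal sketch of such a translation, assuming the canvas may be displayed at a different CSS size than its bitmap. The helper toCanvasPoint and the two interfaces are hypothetical and not taken from the clone’s source.

```typescript
// Hypothetical structural types: subsets of PointerEvent and DOMRect.
interface PointerLike { clientX: number; clientY: number; }
interface RectLike { left: number; top: number; width: number; height: number; }

// Maps a viewport position to the logical canvas pixel underneath it.
function toCanvasPoint(
  event: PointerLike,
  rect: RectLike,
  canvasWidth: number,
  canvasHeight: number,
): { x: number; y: number } {
  // Scale from displayed CSS pixels to logical canvas pixels.
  const scaleX = canvasWidth / rect.width;
  const scaleY = canvasHeight / rect.height;
  return {
    x: Math.floor((event.clientX - rect.left) * scaleX),
    y: Math.floor((event.clientY - rect.top) * scaleY),
  };
}

// Browser usage (assumed wiring; `tool`, `drawingContext`, and `color` are placeholders):
//   canvas.addEventListener('pointerdown', (event) => {
//     const rect = canvas.getBoundingClientRect();
//     const { x, y } = toCanvasPoint(event, rect, canvas.width, canvas.height);
//     tool.onPointerDown(x, y, drawingContext, color);
//   });
```

The same translation would apply to pointermove and pointerup, so a single helper can serve all three handlers.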

With the knowledge from this article, you should now be able to understand the implementation of the Pencil tool in the code below. The line() function implements Bresenham’s line algorithm mentioned above.

export class PencilTool implements Tool {
  private previous: Point = { x: 0, y: 0 };

  onPointerDown(x: number, y: number, { context }: DrawingContext, color: ToolColor): void {
    if (context) {
      context.fillStyle = color.stroke.value;
      context.fillRect(x, y, 1, 1);
      this.previous = { x, y };
    }
  }

  onPointerMove(x: number, y: number, { context }: DrawingContext): void {
    line(this.previous.x, this.previous.y, x, y, (x, y) => {
      context?.fillRect(x, y, 1, 1);
    });
    this.previous = { x, y };
  }
}

By combining the HTML canvas element, the 2D drawing context, and Pointer Events, the core functionality of the Paint clone can be implemented. Now that you can actually draw paintings, it makes sense to share them with other applications.